Explainable AI in US Healthcare: Transparency & Compliance

Explainable AI (XAI) is rapidly gaining traction in US healthcare, promising to revolutionize diagnostics and treatment while facing stringent new regulations expected by late 2026 to ensure transparency and compliance.

The landscape of modern medicine is undergoing a profound transformation, driven by the accelerating integration of artificial intelligence. Among these advancements, the rise of explainable AI (XAI) in US healthcare stands out as a critical development, promising to unlock unprecedented diagnostic precision and personalized treatment plans. As AI systems become more ubiquitous in clinical settings, the imperative for understanding their decision-making processes becomes paramount, not just for clinicians but also for patients and regulatory bodies. This shift towards transparent AI is not merely a technological evolution; it is a fundamental redefinition of trust and accountability in healthcare, particularly with new regulations anticipated by late 2026 that will solidify XAI’s role in ensuring ethical and compliant medical practices.

The imperative for explainable AI in clinical practice

As artificial intelligence continues its deep integration into US healthcare, the need for explainable AI (XAI) has moved from a theoretical concept to an absolute necessity. Black-box AI models, while powerful, pose significant challenges in a field where every decision can have life-altering consequences. Clinicians, patients, and regulators demand clarity, not just results, when AI is involved in diagnosis, treatment recommendations, or risk assessment.

This imperative stems from several key factors, including the need for clinical validation, ethical considerations, and the fostering of patient trust. Without understanding *why* an AI system arrived at a particular conclusion, healthcare providers cannot adequately challenge, refine, or even accept its output. This directly impacts their ability to provide the best possible care and to maintain their professional responsibilities.

Understanding the ‘Why’ behind AI decisions

The core challenge with traditional AI models, especially deep learning networks, is their inherent opacity. They can deliver impressive accuracy, but their internal workings are often too complex for human comprehension. XAI aims to bridge this gap, offering insights into the factors influencing an AI’s output. This transparency is crucial for several reasons:

- Clinical validation: Doctors need to confirm that an AI’s reasoning aligns with medical knowledge and patient history.

- Error detection: Understanding the decision process helps identify biases or flaws in the AI model, which in turn helps prevent misdiagnosis.

- Patient education: Explanations empower patients to understand their conditions and treatment options better.

- Legal and ethical accountability: In cases of adverse outcomes, knowing the AI’s rationale is vital for determining responsibility.

The ability to explain AI decisions allows medical professionals to move beyond simply trusting the technology. It enables them to critically evaluate the AI’s recommendations, integrate them with their own expertise, and ultimately make more informed and responsible decisions for their patients. This collaborative approach between human and AI is the future of intelligent healthcare.

Current landscape of AI in US healthcare: benefits and challenges

Artificial intelligence has already made significant inroads into various facets of US healthcare, demonstrating immense potential to improve efficiency, accuracy, and patient outcomes. From predictive analytics for disease outbreaks to AI-powered image analysis for early cancer detection, the benefits are clear and compelling. However, this rapid adoption also brings a unique set of challenges, particularly concerning the reliability and ethical implications of these advanced technologies.

The current state is characterized by a blend of groundbreaking innovation and a growing awareness of the complexities involved in deploying AI responsibly. While AI offers unparalleled opportunities, navigating its integration requires careful consideration of data quality, algorithmic bias, and the human element in care delivery.

Transformative applications of AI

AI’s impact spans numerous areas within healthcare. Its ability to process vast datasets quickly and identify subtle patterns often eluding human observation makes it an invaluable tool. Here are some prominent applications:

- Diagnostic imaging: AI algorithms can analyze X-rays, MRIs, and CT scans with remarkable speed and accuracy, assisting radiologists in detecting anomalies.

- Drug discovery: AI accelerates the identification of potential drug candidates and optimizes clinical trial design, bringing new treatments to market faster.

- Personalized medicine: By analyzing a patient’s genetic makeup, lifestyle, and medical history, AI helps tailor treatment plans for optimal efficacy.

- Predictive analytics: AI can forecast disease progression, identify patients at high risk of certain conditions, and optimize hospital resource allocation.

These applications underscore the transformative power of AI in enhancing medical capabilities and improving the quality of care. The efficiency gains and improved precision offered by AI are undeniable, setting the stage for a new era in healthcare delivery.

Navigating inherent challenges

Despite the promise, the current deployment of AI in healthcare is not without its hurdles. The ‘black box’ problem, where AI’s decision-making process is opaque, remains a significant concern. Other challenges include data privacy, cybersecurity risks, and the potential for algorithmic bias, which could exacerbate existing health disparities.

Moreover, the integration of AI into existing clinical workflows requires significant investment in infrastructure and training for healthcare professionals. Overcoming these challenges is crucial for realizing the full potential of AI while safeguarding patient welfare and maintaining public trust.

Expected US regulations: shaping the future of AI in healthcare

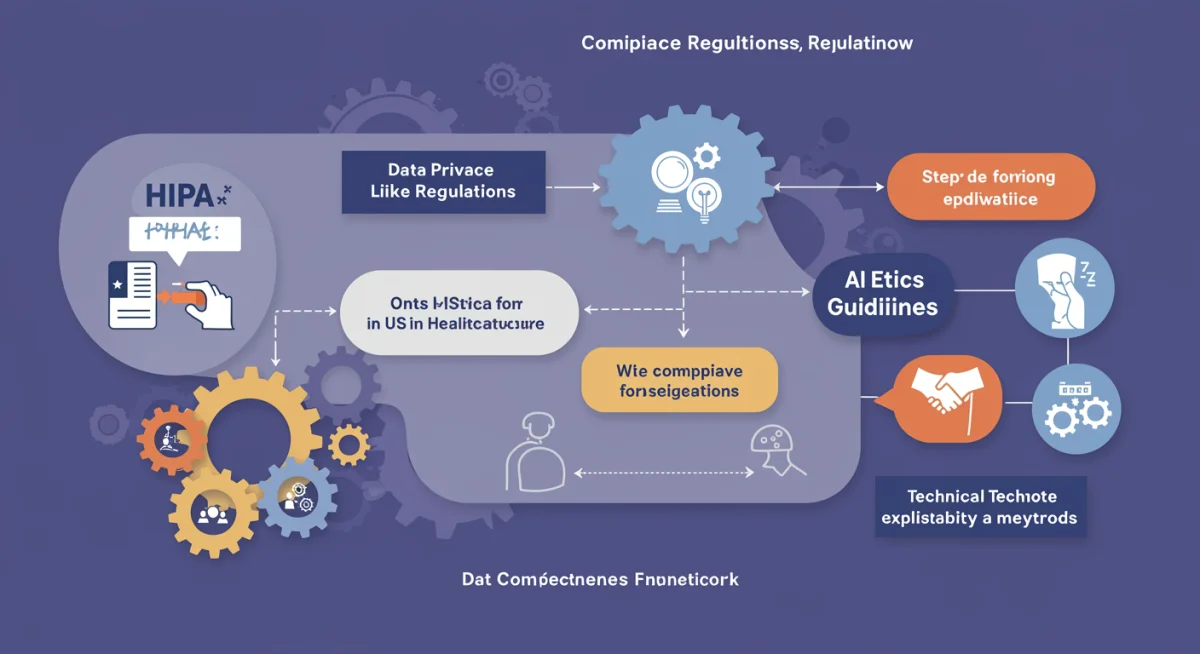

The regulatory landscape for artificial intelligence in US healthcare is on the cusp of significant change, with comprehensive guidelines and rules anticipated by late 2026. These forthcoming regulations are poised to fundamentally reshape how AI systems are developed, deployed, and monitored within clinical environments. The primary goal is to ensure that AI technologies are not only innovative but also safe, effective, transparent, and equitable, addressing widespread concerns about accountability and ethical use.

Federal bodies including the FDA, HHS, and NIST are actively shaping this landscape, the first two through rules and guidance and NIST through voluntary standards such as its AI Risk Management Framework. This indicates a multi-faceted approach that will cover everything from data governance to algorithmic explainability, and it reflects a recognition that while AI offers immense benefits, unregulated deployment carries substantial risks.

Key regulatory areas and their impact

The upcoming regulations are expected to focus on several critical areas, each designed to instill greater confidence and control over AI’s role in healthcare. These areas will likely include:

- Data governance and privacy: Strict rules on how patient data is collected, used, and protected, building upon existing frameworks like HIPAA.

- Algorithmic transparency and explainability: Mandates for AI systems to provide clear, understandable explanations for their decisions, directly addressing the need for XAI.

- Bias detection and mitigation: Requirements for developers to actively test for and mitigate algorithmic biases that could lead to unfair or inaccurate outcomes for certain patient populations.

- Validation and monitoring: Protocols for rigorous pre-market validation and continuous post-market surveillance of AI models to ensure ongoing performance and safety.

These regulatory pillars will necessitate a shift in how AI is designed and implemented within the healthcare sector. Developers and healthcare providers will need to adopt new methodologies and tools to meet these stringent requirements, fostering a culture of responsible AI innovation.
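To make the bias-testing requirement more concrete, here is a minimal Python sketch of one common check: comparing a model's true positive rate across patient groups. The function names, the toy labels, and the group labels "A" and "B" are all hypothetical, and no specific regulation is known to prescribe this particular metric; it is only one of several fairness measures an audit might use.

```python
from collections import defaultdict


def true_positive_rate_by_group(y_true, y_pred, groups):
    """Return {group: TPR}, computed only over cases whose true label is 1."""
    hits = defaultdict(int)       # correctly flagged positives per group
    positives = defaultdict(int)  # actual positives per group
    for truth, pred, group in zip(y_true, y_pred, groups):
        if truth == 1:
            positives[group] += 1
            if pred == 1:
                hits[group] += 1
    return {g: hits[g] / positives[g] for g in positives}


def tpr_gap(rates):
    """Largest difference in true positive rate between any two groups."""
    values = list(rates.values())
    return max(values) - min(values)


# Hypothetical toy data: 1 = condition present (y_true) or flagged (y_pred).
y_true = [1, 1, 1, 1, 0, 1, 1, 1, 1, 0]
y_pred = [1, 1, 1, 0, 0, 1, 0, 0, 1, 1]
groups = ["A", "A", "A", "A", "A", "B", "B", "B", "B", "B"]

rates = true_positive_rate_by_group(y_true, y_pred, groups)
gap = tpr_gap(rates)
print(rates, gap)
```

In this toy data the model catches a larger share of true cases in group A than in group B. A gap like that would be a prompt for investigation rather than proof of bias, since small samples and differing case mixes can produce the same pattern.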

Anticipated compliance challenges

While the regulations aim to improve patient safety and trust, they will also present considerable compliance challenges for AI developers and healthcare organizations. Meeting the new standards for transparency, bias mitigation, and continuous monitoring will require significant investment in technology, processes, and personnel.

Organizations will need to establish robust internal governance structures, conduct regular audits of their AI systems, and ensure that their staff are adequately trained to work with and interpret explainable AI outputs. The successful navigation of these challenges will be crucial for any entity looking to leverage AI in the future of US healthcare.

The role of XAI in ensuring transparency and trust

The fundamental promise of explainable AI (XAI) in US healthcare extends beyond mere technical functionality; it is about building and sustaining transparency and trust. In a field where human lives are at stake, the ability to understand an AI’s rationale is not a luxury but a necessity. XAI transforms opaque algorithms into comprehensible tools, fostering confidence among patients, clinicians, and regulatory bodies alike.

Transparency, enabled by XAI, allows for scrutiny, validation, and ultimately, accountability. This is particularly vital in healthcare, where the consequences of AI errors can be severe. By demystifying AI’s inner workings, XAI empowers stakeholders to engage with the technology on an informed basis, moving beyond blind reliance to intelligent collaboration.

Demystifying AI for better patient outcomes

XAI plays a pivotal role in making AI systems understandable to those who need it most – patients and their care providers. When a patient receives a diagnosis or treatment recommendation influenced by AI, they have a right to know the basis of that decision. XAI provides the tools to articulate this basis clearly, helping bridge the communication gap between complex algorithms and human understanding.

- Enhanced patient understanding: Patients can grasp why a certain diagnosis was made or why a particular treatment is recommended, leading to better adherence and engagement.

- Improved clinician confidence: Doctors can integrate AI insights with their clinical judgment, confident in their ability to explain and defend decisions.

- Reduced medical errors: Transparent AI allows for easier identification of erroneous or biased outputs, enabling timely correction and preventing harm.

This demystification process is essential for cultivating a healthcare environment where AI is seen as a trustworthy partner, not a mysterious black box. It ensures that technology serves humanity, rather than dictating without reason.

Building trust through accountability

Trust is the bedrock of the patient-provider relationship, and XAI is instrumental in extending this trust to AI-driven healthcare. When AI decisions are explainable, accountability becomes possible. If an AI system makes an incorrect recommendation, XAI can help pinpoint the factors that led to that error, allowing for investigation, learning, and improvement.

This level of accountability is crucial for regulatory compliance and for maintaining the ethical standards of medicine. It ensures that AI systems are not only technically proficient but also ethically sound and socially responsible. By fostering an environment of transparency and accountability, XAI ultimately strengthens the bond of trust between technology, providers, and patients.

Technical approaches to achieving explainability in AI

Achieving explainability in AI is not a singular task but rather a multifaceted endeavor involving various technical approaches. As AI models become increasingly sophisticated, particularly in deep learning, developing methods to interpret their decisions has become a vibrant area of research and development. These techniques aim to transform complex, opaque algorithms into more transparent and understandable systems, crucial for their adoption in sensitive domains like healthcare.

The choice of XAI technique often depends on the specific AI model, the type of data, and the context in which the explanation is needed. From global interpretability to local explanations, each method offers a different lens through which to understand AI behavior, helping to build confidence and facilitate regulatory compliance.

Diverse methods for XAI

Several technical approaches are employed to enhance the explainability of AI models. These methods can broadly be categorized into intrinsic explainability (where the model is inherently interpretable) and post-hoc explainability (where explanations are generated after the model has been trained). In the list that follows, LIME and SHAP are post-hoc methods, while rule-based systems are intrinsically interpretable. Both categories are vital for different use cases:

- LIME (Local Interpretable Model-agnostic Explanations): This technique explains the predictions of any classifier or regressor by approximating it locally with an interpretable model. It helps understand why a model made a specific prediction for a particular instance.

- SHAP (SHapley Additive exPlanations): Based on game theory, SHAP explains the output of any machine learning model by connecting optimal credit allocation with local explanations. It assigns each feature an importance value for a particular prediction.

- Feature importance: Simpler models often allow direct access to feature importance scores, indicating which input variables had the most significant impact on the model’s output.

- Rule-based systems: For certain problems, AI models can be designed to generate human-readable rules that lead to a decision, making their logic inherently transparent.

These techniques provide a diverse toolkit for developers and practitioners to make AI systems more transparent. The ongoing research in this field continues to yield more sophisticated and context-aware explanation methods.
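The game-theoretic idea behind SHAP can be illustrated with a small from-scratch Python sketch that computes exact Shapley values for a single prediction. This is not the API of the SHAP library, which uses faster approximations. The `risk_score` function, its coefficients, the patient values, and the reference values are all hypothetical, and the sketch assumes that a feature "missing" from a coalition is replaced by its reference value.

```python
from itertools import combinations
from math import factorial


def shapley_values(predict, instance, baseline):
    """Exact Shapley values for one prediction.

    Features outside a coalition take their baseline value. The cost grows
    as 2**n, so this is only practical for a handful of features.
    """
    names = list(instance)
    n = len(names)

    def value(coalition):
        # Model output when only the coalition's features use patient values.
        x = {f: (instance[f] if f in coalition else baseline[f]) for f in names}
        return predict(x)

    phi = {}
    for f in names:
        others = [g for g in names if g != f]
        total = 0.0
        for size in range(n):
            # Shapley weight for coalitions of this size.
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                total += weight * (value(s | {f}) - value(s))
        phi[f] = total
    return phi


# Hypothetical risk score for illustration only, not a clinical model.
def risk_score(x):
    return 0.02 * x["age"] + 0.5 * x["smoker"] + 0.01 * x["systolic_bp"]


patient = {"age": 70, "smoker": 1, "systolic_bp": 150}
reference = {"age": 50, "smoker": 0, "systolic_bp": 120}

phi = shapley_values(risk_score, patient, reference)
print(phi)
```

Because this toy score is linear, each attribution equals the coefficient times the feature's difference from the reference: roughly 0.4 for age, 0.5 for smoking status, and 0.3 for blood pressure. The attributions add up to the gap between the patient's score and the reference score, which is the property that makes Shapley-based explanations easy to audit.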

Challenges in implementing XAI techniques

Despite the advancements, implementing XAI techniques in real-world healthcare scenarios presents its own set of challenges. One primary hurdle is the trade-off between model performance and interpretability; often, the most accurate models are the least transparent. Another challenge lies in ensuring that explanations are not only technically sound but also comprehensible to clinicians and patients.

Furthermore, the dynamic nature of healthcare data and the continuous evolution of AI models mean that XAI solutions must be robust and adaptable. The development of standardized metrics for evaluating explanation quality and consistency is an ongoing effort, crucial for widespread adoption and regulatory acceptance.

Preparing for compliance: strategies for healthcare providers

With new regulations for AI in US healthcare on the horizon by late 2026, healthcare providers must proactively prepare for compliance. This is not merely a legal obligation but an opportunity to embed ethical AI practices into the core of their operations, enhancing patient safety and operational efficiency. Preparation involves a multi-faceted strategy encompassing technological adoption, organizational restructuring, and comprehensive staff training.

Ignoring these impending changes could lead to significant penalties, reputational damage, and, most importantly, compromised patient care. Therefore, understanding and implementing robust compliance strategies is paramount for any healthcare institution leveraging or planning to leverage AI.

Developing an AI governance framework

A crucial first step for healthcare providers is to establish a comprehensive AI governance framework. This framework should outline clear policies and procedures for the entire lifecycle of AI systems, from procurement and deployment to monitoring and retirement. Key components of such a framework include:

- Ethical guidelines: Define principles for responsible AI use, including fairness, accountability, and transparency.

- Data management policies: Establish strict protocols for data collection, storage, processing, and security, ensuring compliance with HIPAA and other privacy regulations.

- Risk assessment and mitigation: Implement systematic processes to identify, evaluate, and mitigate potential risks associated with AI deployment, including algorithmic bias and privacy breaches.

- Roles and responsibilities: Clearly define who is responsible for AI oversight, validation, and maintenance within the organization.

Establishing such a framework ensures a structured and systematic approach to AI adoption, laying the groundwork for regulatory compliance and ethical operation.

Training and workforce development

The success of AI integration and compliance hinges significantly on the readiness of the healthcare workforce. Clinicians, IT staff, and administrators will all require specialized training to understand, utilize, and oversee AI systems effectively. This includes:

- AI literacy for clinicians: Educating medical professionals on how AI works, its capabilities, limitations, and how to interpret XAI outputs.

- Technical skills for IT teams: Training staff on deploying, maintaining, and monitoring AI systems, including troubleshooting and security protocols.

- Ethical AI training: Ensuring all relevant personnel understand the ethical implications of AI, including bias detection and mitigation strategies.

Investing in workforce development is critical to fostering an environment where AI is not just a tool, but an integral, trusted part of the healthcare ecosystem. Adequate training empowers staff to navigate the complexities of AI, ensuring both operational efficiency and regulatory adherence.

Future outlook: AI innovation meets regulatory harmony

The future of AI in US healthcare is poised at an exciting juncture where rapid innovation is expected to meet increasingly sophisticated regulatory frameworks. The anticipated regulations by late 2026 are not merely constraints but foundational elements designed to foster responsible growth and ensure that AI technology truly serves the best interests of patients and healthcare providers. This harmony between innovation and regulation will be critical in unlocking the full potential of AI while mitigating its inherent risks.

As the industry adapts to these new standards, we can anticipate a landscape where AI systems are not only powerful but also inherently trustworthy, transparent, and accountable. This will drive a new era of medical advancements, underpinned by ethical considerations and robust oversight.

Advancements driven by regulatory clarity

Rather than stifling innovation, clear regulations are likely to accelerate it in specific, beneficial directions. By setting clear boundaries and expectations, regulators provide a roadmap for developers to innovate responsibly. This clarity can lead to:

- Focus on ethical AI design: Developers will prioritize building explainable, fair, and secure AI systems from the ground up, integrating XAI principles into their core architecture.

- Increased investment in compliant solutions: The market demand for AI tools that meet regulatory standards will grow, encouraging investment in validated and transparent technologies.

- Standardization and interoperability: Regulations may drive the adoption of common standards for AI data, models, and explanations, fostering greater interoperability across healthcare systems.

This regulatory clarity will encourage a more mature and responsible approach to AI development, moving beyond experimental deployments to robust, clinically validated solutions.

The evolving role of human oversight

Even with highly explainable AI, human oversight will remain indispensable. The future will likely see a collaborative model where AI augments human capabilities rather than replacing them. Clinicians may increasingly take on the role of ‘AI interpretability specialists,’ skilled in critically evaluating AI outputs and integrating them into complex patient care decisions.

This evolving role emphasizes the importance of continuous education and training for healthcare professionals. The synergy between advanced AI and expert human judgment will define the next generation of healthcare delivery, ensuring that technology remains a tool in the hands of compassionate and skilled practitioners. The ultimate goal is a future where AI empowers better healthcare decisions, leading to superior patient outcomes and a more efficient, equitable healthcare system.

| Key Aspect | Brief Description |
|---|---|
| XAI Importance | Crucial for understanding AI decisions, ensuring clinical validation, and building patient trust in healthcare. |
| Regulatory Outlook | New US regulations by late 2026 will mandate transparency, accountability, and ethical AI use in healthcare. |
| Compliance Strategies | Healthcare providers must develop AI governance frameworks and invest in workforce training for AI literacy. |
| Future Impact | Regulatory clarity will drive ethical AI innovation, fostering trust and improving patient outcomes through transparent systems. |

Frequently Asked Questions About Explainable AI in Healthcare

What is explainable AI (XAI), and why does it matter in US healthcare?

Explainable AI (XAI) refers to artificial intelligence systems that can provide clear and understandable reasons for their decisions, predictions, or recommendations. In US healthcare, XAI is crucial for clinicians, patients, and regulators to comprehend why an AI reached a particular conclusion, fostering trust and accountability in medical applications.

Why are new regulations for AI in healthcare being introduced?

New regulations are being introduced to ensure the safe, ethical, and effective deployment of AI in healthcare. With AI’s growing influence, concerns about transparency, algorithmic bias, data privacy, and accountability necessitate clear guidelines. These regulations aim to protect patients and maintain public trust in AI-driven medical technologies.

How will XAI affect patient trust and safety?

XAI will significantly enhance patient trust and safety by demystifying AI decisions. When clinicians can explain AI-generated diagnoses or treatment plans, patients are more likely to understand and accept them. This transparency helps identify and mitigate potential errors or biases, strengthening the patient-provider relationship and improving overall care quality.

What compliance challenges will healthcare providers face?

Healthcare providers will face challenges in establishing robust AI governance frameworks, ensuring data privacy, mitigating algorithmic bias, and validating AI models. Significant investment in technology, staff training, and continuous monitoring will be required to meet the stringent new standards for transparency and accountability.

Which technical approaches are used to achieve explainability?

Various technical approaches are employed, including LIME (Local Interpretable Model-agnostic Explanations) and SHAP (SHapley Additive exPlanations), which provide local explanations for individual predictions. Other methods include feature importance analysis and designing inherently interpretable rule-based systems, all aimed at making AI’s decision-making process transparent.

Conclusion

The trajectory of explainable AI in US healthcare is undeniably upward, driven by both technological potential and an increasing demand for transparency and accountability. As we approach the anticipated regulatory changes by late 2026, the emphasis on XAI will only intensify, solidifying its role as a cornerstone of ethical and effective AI deployment in medicine. These regulations, far from being obstacles, will serve as a vital framework, guiding developers and healthcare providers towards building AI systems that are not only powerful but also trustworthy, fair, and comprehensible. The collaborative efforts between innovators, clinicians, and policymakers will ultimately shape a future where AI enhances patient care, fosters confidence, and upholds the highest standards of medical ethics, leading to a more transparent and patient-centric healthcare ecosystem for all.